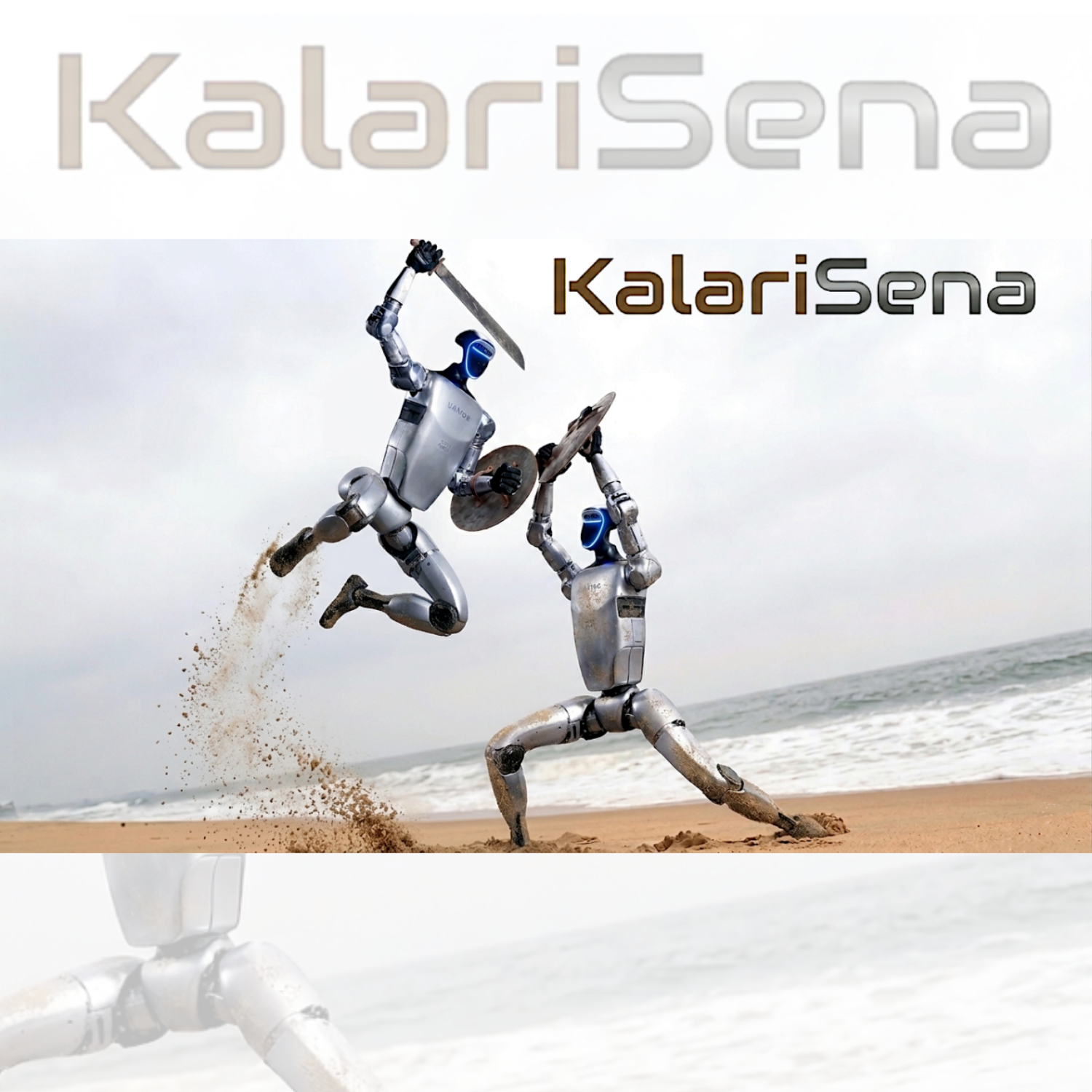

KalariSena

KalariSena is our movement-intelligence and embodied-skills program for humanoid robotics, centered on teaching Kalaripayattu, an Indian martial art, to robots. It translates the art's principles of agility, balance, reflexes, defensive movement, rapid response, and whole-body coordination into machine embodiment, enabling humanoids to move with greater precision, control, and physical discipline in complex real-world environments. By grounding robotic motion in a structured tradition of combat-tested movement, KalariSena aims to build faster, more resilient, and operationally capable humanoid systems for deployment in railways, airports, Kumbh Mela-scale gatherings, crowd-control scenarios, security at Indian monuments, and other dense public settings where mobility, responsiveness, and embodied reliability are critical.